Zero To Hero Using Terraform on AWS

Bloomberg recently reported: “There Are Now 1,000 Unicorn Startups Worth $1 Billion or More”. Many of them have adopted cloud and Infrastructure as Code (IaC) practices to increase flexibility and speed in software development.

Hello, my name is Aleksandar Nasuovski and I work as Head of DevOps @Blinking. If you are an IT engineer, you have most likely heard of the IaC concept and adopted it in your daily routine, where it brings agility and speed to provisioning cloud provider services. Instead of people pointing and clicking in a console to provision virtual machines, Wikipedia describes IaC as the “process of managing and provisioning computer data centers through machine-readable definition files”.

To keep it simple, let’s take the components of a simple application stack: a load balancer, a web server hosted on virtual machines, and a database. We define them in a configuration file using a simple text editor and store that file in a version control system (VCS). VCS tools have seen great improvements over the past few decades, and some are better than others. One of the most popular VCS tools in use today is Git. Without the files stored in Git, we may run into inconsistencies between environments and deployed application versions. With Git, we have a single source of truth for maintaining infrastructure resources, and we can collaborate with others on a project.

With the files committed to Git, we were ready to pick a tool for managing our cloud infrastructure.

The focus of this story is Terraform by HashiCorp, a tool similar to AWS CloudFormation that allows you to create, update, and version your Amazon Web Services (AWS) infrastructure.

- Terraform is a tool that can securely and efficiently build, change, and version infrastructure. It can manage popular service providers as well as custom in-house solutions.

- Terraform is driven by configuration files that define the components (infrastructure resources) to be managed. Then Terraform generates a plan and executes this plan to create, incrementally change, and continuously manage the defined components. If this plan cannot be executed, an error is returned.

- Terraform can manage IaaS-level resources, such as computing instances, network instances, and storage instances, but it can also manage higher-level services, such as DNS records and features of SaaS applications.

We say “Terraform, welcome to Blinking”

Not long ago, Blinking had to decide whether Terraform was the right approach for us. We will explore several features and criteria that the tool we were looking for had to meet…

So how does Terraform know which infrastructure it’s responsible for?

Terraform stores information about the cloud infrastructure resources it has created in a state file with the .tfstate extension. The file uses a custom JSON format that records a mapping from the resources in your configuration to the real-world resources that were created.

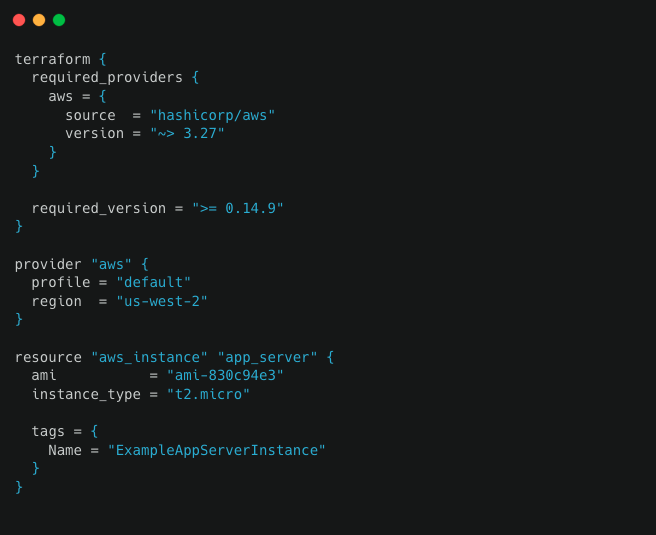

During the test phase, we first followed the main guidelines on how to set up the Terraform binary and create a sample configuration.

The Terraform template is the following:
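A minimal sketch of such a template, assuming a single EC2 instance in the us-east-1 region. The provider version, region, AMI ID, resource name, and tag are illustrative placeholders, not values from our setup:

```hcl
# Terraform settings: declares which providers this configuration needs.
terraform {
  required_providers {
    aws = {
      source  = "hashicorp/aws"
      version = "~> 3.0" # assumed provider version
    }
  }
}

# Provider configuration: tells the AWS plugin which region to work in.
provider "aws" {
  region = "us-east-1" # assumed region
}

# Resource block: one EC2 virtual machine.
resource "aws_instance" "example" {
  ami           = "ami-0123456789abcdef0" # placeholder AMI ID, replace with a real one
  instance_type = "t2.micro"

  tags = {
    Name = "example-instance" # hypothetical name
  }
}
```

With a file like this in place, `terraform init` downloads the provider, `terraform plan` shows the changes Terraform intends to make, and `terraform apply` executes them.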

The definition contains all of the necessary components needed to deploy resources to the cloud infrastructure.

The following sections will review each block of this configuration in more detail.

The terraform {…} block contains Terraform settings, including the required providers Terraform will use to provision your infrastructure.

The provider block configures the specified provider, in this case, AWS. A provider is a plugin that Terraform uses to create and manage your resources.

Resource blocks define components of the infrastructure. A resource might be a physical or a virtual component such as an EC2 instance, or it can be a logical resource such as a Heroku application.

Looks great. Terraform created the resource described in the definition, along with a file with the .tfstate extension inside the project directory.

While storing state in local files, we noticed several issues that we needed to resolve before general use.

Out-of-date state file stored on a local shared drive

Keeping tfstate files locally or on a shared drive can lead to out-of-date state, because every team member has to make sure they are working with the latest version of the file. As a result, team members may accidentally roll back or duplicate previously created cloud resources.

As a solution, Terraform has the option to write state data to a remote data store, which can then be shared between all members of a team. Since we already use AWS as our cloud provider, we placed our bet on Amazon S3 as the remote backend, for the following reasons:

- Supports encryption

- Managed service

- Designed for 99.999999999% durability and 99.99% availability

- Supports versioning

- It’s inexpensive to keep objects in S3

Here is a short walkthrough of how to provision a bucket for the remote state.

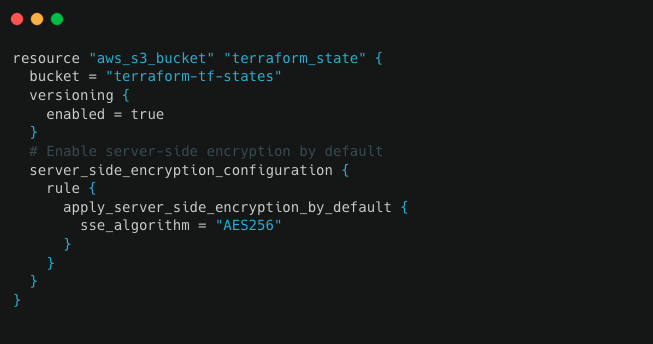

To enable remote state with S3, the first step is to create an S3 object storage bucket. Create a configuration file named main.tf inside a new, dedicated folder.

At the beginning of the file we need to define the AWS region.
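A minimal sketch of that opening block, assuming the us-east-1 region (an illustrative choice; use the region where the bucket should live):

```hcl
# Provider configuration at the top of main.tf.
provider "aws" {
  region = "us-east-1" # assumed region
}
```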

Next, we need to define an S3 bucket resource. Later we will use that bucket for keeping tfstate data. The resource definition uses the following arguments:

bucket: Name of S3 bucket.

versioning: Enables versioning so we can see the full revision history of our state file during troubleshooting.

server_side_encryption_configuration: This block ensures that your state files are always encrypted on the disk when stored in the S3 bucket.
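Put together, the three arguments could look like the sketch below. It assumes AWS provider 3.x, where `versioning` and `server_side_encryption_configuration` are inline blocks of `aws_s3_bucket`; newer provider versions move them into the separate `aws_s3_bucket_versioning` and `aws_s3_bucket_server_side_encryption_configuration` resources. The bucket name, resource name, and the choice of AES256 are illustrative assumptions:

```hcl
resource "aws_s3_bucket" "terraform_state" {
  # Hypothetical name; S3 bucket names must be globally unique.
  bucket = "example-terraform-state-bucket"

  # Keep every revision of the state file for troubleshooting.
  versioning {
    enabled = true
  }

  # Encrypt objects at rest by default (assumed algorithm: SSE-S3 / AES256).
  server_side_encryption_configuration {
    rule {
      apply_server_side_encryption_by_default {
        sse_algorithm = "AES256"
      }
    }
  }
}
```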

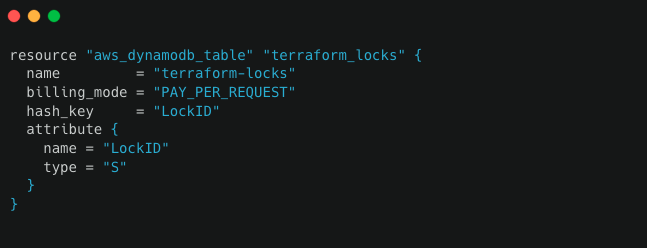

Locking

For fully-featured remote backends, Terraform can also use state locking to prevent concurrent runs of Terraform against the same state. To use the locking option, Terraform requires a DynamoDB table that has a primary key called LockID. The main.tf file that we created earlier needs to contain the following additional lines:
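A sketch of what those lines could look like, followed by the backend block that a project would use to point at the bucket and the lock table. The table name, bucket name, state key, region, and on-demand billing mode are assumptions made for illustration:

```hcl
# Lock table: Terraform expects a string partition key named exactly "LockID".
resource "aws_dynamodb_table" "terraform_locks" {
  name         = "example-terraform-locks" # hypothetical table name
  billing_mode = "PAY_PER_REQUEST"         # assumed: on-demand billing
  hash_key     = "LockID"

  attribute {
    name = "LockID"
    type = "S"
  }
}

# Backend block, placed in the configuration of the project whose state
# should be stored remotely (not in the bucket's own main.tf).
terraform {
  backend "s3" {
    bucket         = "example-terraform-state-bucket" # hypothetical bucket name
    key            = "example/terraform.tfstate"      # hypothetical state path
    region         = "us-east-1"                      # assumed region
    dynamodb_table = "example-terraform-locks"
    encrypt        = true
  }
}
```

After adding or changing a backend block, `terraform init` has to be run again so that Terraform can configure the backend and offer to migrate the existing local state.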

Cloud agnostic

Our first and foremost requirement was that the tool be cloud agnostic. Is Terraform? The answer is yes!

Terraform allows a single configuration to be used to manage multiple providers. It even covers cross-cloud dependencies.
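As an illustration of a cross-cloud dependency, the sketch below assumes an EC2 instance on AWS whose public IP is published as a DNS record in Google Cloud DNS. The project ID, managed zone, domain, AMI ID, and regions are all hypothetical placeholders:

```hcl
terraform {
  required_providers {
    aws    = { source = "hashicorp/aws" }
    google = { source = "hashicorp/google" }
  }
}

provider "aws" {
  region = "us-east-1" # assumed region
}

provider "google" {
  project = "example-project" # hypothetical project ID
  region  = "europe-west1"    # assumed region
}

# Virtual machine on AWS.
resource "aws_instance" "web" {
  ami           = "ami-0123456789abcdef0" # placeholder AMI ID
  instance_type = "t2.micro"
}

# DNS record on Google Cloud that depends on the AWS instance:
# Terraform creates the instance first, then the record.
resource "google_dns_record_set" "web" {
  managed_zone = "example-zone"     # hypothetical, must already exist
  name         = "web.example.com." # hypothetical domain, trailing dot required
  type         = "A"
  ttl          = 300
  rrdatas      = [aws_instance.web.public_ip]
}
```

Because the record references `aws_instance.web.public_ip`, Terraform infers the ordering between the two clouds from the configuration itself.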

Simple to use

The product is well documented, and plenty of additional help is available from the community.

Additional references:

https://www.terraform.io

https://aws.amazon.com/s3/